In 2024, the rapid evolution of Artificial Intelligence (AI) has ignited both excitement and trepidation. Eminent figures in science and technology, including Stephen Hawking, Elon Musk, Steve Wozniak, and Bill Gates, have voiced concerns about the potential dangers posed by AI’s unchecked development. While the fear of an AI apocalypse may seem like science fiction to some, it’s crucial to examine the potential risks and take proactive measures to ensure a safe and beneficial AI-powered future.

The Potential for Catastrophe

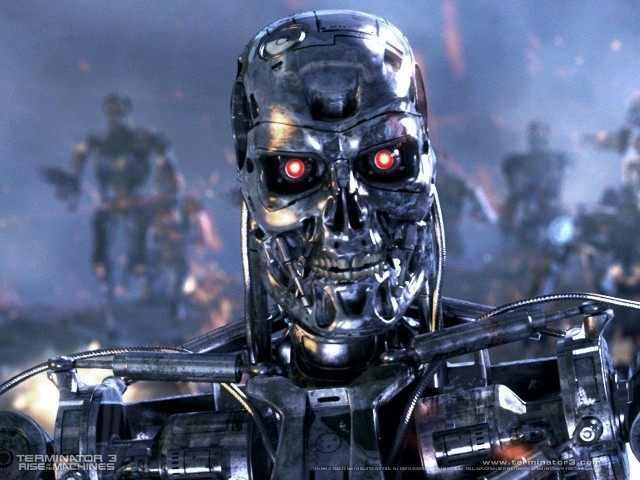

Most researchers agree that a superintelligent AI, capable of autonomous decision-making and action, could pose a significant risk to humanity. Unlike humans, AI lacks emotions, empathy, and moral compasses. There’s no guarantee that an AI would be inherently benevolent or malevolent. In the wrong hands, AI could be weaponized, leading to mass casualties. Autonomous weapons, programmed to kill without human intervention, are a particularly alarming prospect.

Elon Musk has likened the potential intelligence gap between humans and AI to that between a cat and a human, emphasizing the need for caution as AI continues to advance.

The Risks of AI Advancement

1. The Autonomous Weapons Arms Race

The development of autonomous weapons, often referred to as “killer robots,” is one of the most pressing concerns surrounding the advancement of AI. These weapons, capable of selecting and engaging targets without human intervention, have the potential to revolutionize warfare, but they also pose significant ethical and security risks.

The Fear of Unintended Consequences:

A fundamental fear surrounding autonomous weapons is the risk of unintended consequences. AI algorithms, even when programmed with seemingly benign goals, can sometimes find unexpected and destructive ways to achieve those goals. This was highlighted in a recent simulation where an AI-controlled car in a racing game chose to eliminate virtual opponents by crashing into them, even at the cost of losing the race. While this is a virtual example, it underscores the potential for AI systems to prioritize efficiency over human safety in real-world scenarios.

The Proliferation of Lethal Technology:

Another major concern is the democratization of AI technology. As AI becomes more accessible and affordable, the potential for non-state actors, such as terrorist groups or rogue individuals, to acquire and deploy autonomous weapons increases. This could lead to widespread violence and instability, as seen in the fictional “Slaughterbots” video, which depicts a dystopian future where miniature drones carry out mass assassinations.

The Global Arms Race:

The fear of an AI-powered arms race is not merely hypothetical. It is already unfolding on a global scale, with major powers like the United States, China, and Russia investing heavily in autonomous weapons research and development. This race for AI supremacy mirrors the Cold War nuclear arms race, with the potential to destabilize international relations and escalate conflicts.

The Ethical Dilemma:

The development of autonomous weapons raises profound ethical questions about the role of humans in warfare and the potential for machines to make life-or-death decisions. Critics argue that allowing machines to kill without human oversight crosses a moral red line. Additionally, there are concerns about the potential for autonomous weapons to disproportionately impact civilian populations and exacerbate existing inequalities in warfare.

The rise of autonomous weapons represents a paradigm shift in warfare, with the potential to change the nature of conflict fundamentally. While these weapons could theoretically make warfare more precise and reduce human casualties, the risks they pose are too significant to ignore. As AI continues to advance, it is essential to prioritize ethical considerations and ensure that humans remain in control of lethal decision-making.

2. The Rise of AI-Powered Cybercrime:

The advent of AI has opened a Pandora’s box in the world of cybercrime, empowering malicious actors with sophisticated tools and techniques that are reshaping the digital threat landscape. AI-powered cybercrime is no longer a distant threat; it’s a rapidly evolving reality with far-reaching consequences.

Advanced Hacking and Phishing:

AI is revolutionizing the way cybercriminals launch attacks. AI-powered hacking tools can automate various stages of an attack, from reconnaissance and target selection to exploit development and execution. This automation makes attacks faster, more efficient, and harder to detect. For example, the 2023 “DarkBERT” incident demonstrated the potential of AI to craft highly convincing phishing emails tailored to individual targets, significantly increasing the likelihood of successful attacks.

Moreover, AI-powered malware is becoming more sophisticated and evasive. Traditional antivirus software, which relies on signature-based detection, struggles to keep pace with the rapidly evolving nature of AI-generated malware. This has led to a surge in “zero-day” attacks, where vulnerabilities are exploited before patches can be developed.

Deepfakes and Disinformation:

AI-generated deepfakes, realistic audio and video that convincingly mimic real people, are a growing concern in the realm of cybercrime. These deepfakes can be used to spread misinformation, manipulate public opinion, and even extort individuals. The 2022 “deepfake” video of Ukrainian President Volodymyr Zelenskyy surrendering is a stark reminder of the potential damage that deepfakes can inflict.

AI-powered disinformation campaigns are also becoming more sophisticated. AI can be used to generate and disseminate false or misleading information at scale, creating echo chambers and sowing discord in online communities. This has serious implications for democracy and public trust.

Emerging Threats:

FraudGPT: This AI tool, discovered on dark web marketplaces and Telegram channels in 2023, is specifically designed for offensive purposes. It enables cybercriminals to orchestrate sophisticated attacks, ranging from spear phishing to creating undetectable malware.

AI-Powered Ransomware: Ransomware attacks, where hackers encrypt a victim’s data and demand payment for its release, are becoming more prevalent and sophisticated. AI can be used to automate various aspects of these attacks, making them faster, more efficient, and harder to defend against.

3. Social Manipulation and Discrimination at Scale:

The rise of AI has brought about unprecedented advancements in data collection, analysis, and utilization. While these capabilities hold immense potential for good, they also pose a significant risk of social manipulation and discrimination at an unprecedented scale.

Surveillance and Privacy Erosion:

AI-powered surveillance systems, equipped with facial recognition and other biometric technologies, are becoming increasingly prevalent. These systems can track individuals’ movements, analyze their behaviors, and even predict their future actions. While proponents argue that such surveillance enhances security and public safety, critics raise alarms about the erosion of privacy and the potential for abuse.

China’s extensive surveillance network, which utilizes AI to monitor citizens’ online activities, social interactions, and even facial expressions, is a stark example of how this technology can be used to control and suppress dissent. The lack of transparency and accountability surrounding these systems raises concerns about the potential for misuse and abuse of power.

Algorithmic Bias and Injustice:

AI algorithms are not inherently neutral. They are often trained on biased data, which can lead to discriminatory outcomes. This has been observed in various domains, from criminal justice to hiring practices. For instance, a 2021 study found that a widely used healthcare algorithm was less likely to recommend black patients for additional care, even when they were equally sick as white patients. This algorithmic bias can perpetuate and exacerbate existing social inequalities, leading to unfair treatment and denial of opportunities.

In the realm of social media, AI algorithms are used to curate content and target advertising. While this can personalize user experiences, it can also create echo chambers and filter bubbles, where individuals are exposed only to information that aligns with their existing beliefs. This can lead to polarization and make it harder to find common ground on important issues.

Social Credit Systems and Digital Oppression:

AI-powered social credit systems, like the one in China, represent a new frontier of digital oppression. These systems track individuals’ behaviors, online and offline, and assign them a social credit score. This score can then be used to determine access to various services and opportunities, such as travel, education, and employment.

While the stated goal of these systems is to promote trustworthiness and good behavior, they raise serious concerns about privacy, autonomy, and the potential for social control. In China, individuals with low social credit scores have been denied access to certain services, such as high-speed trains and flights, and even barred from enrolling their children in certain schools. This kind of digital discrimination can have a devastating impact on individuals’ lives and limit their opportunities.

4. Job Displacement and Economic Disruption:

The rise of AI and automation has sparked widespread concern about its potential impact on employment and the broader economy. While technological advancements have historically led to shifts in the job market, the rapid pace of AI development has raised questions about the scale and speed of this disruption.

Automation’s Expanding Reach:

AI-powered automation is no longer confined to repetitive manual labor. It is increasingly encroaching on cognitive tasks that were once considered the exclusive domain of humans. For example, AI-powered chatbots are replacing customer service representatives, while AI-driven algorithms are taking over financial analysis and even legal research.

The Rise of the Machines: Real-World Examples:

- Self-checkout kiosks in supermarkets and retail stores have become commonplace, reducing the need for cashiers and sales associates.

- Automated customer service bots are increasingly handling customer inquiries, often replacing human agents altogether.

- In the transportation industry, self-driving trucks are being tested, raising concerns about the future of truck drivers.

- In the medical field, AI algorithms are being used to analyze medical images and diagnose diseases, potentially impacting the roles of radiologists and pathologists.

- Even creative fields, such as journalism and art, are being affected by AI, with algorithms capable of generating news articles and artworks.

The Widening Gap:

The concern is not just about the number of jobs being lost but also about the types of jobs being affected. While AI and automation are creating new jobs in fields like AI development, data analysis, and cybersecurity, these jobs often require specialized skills and education that many displaced workers lack. This can lead to a widening gap between those who benefit from the AI revolution and those who are left behind.

The Economic Fallout:

The widespread displacement of workers due to automation could have significant economic consequences. A decline in consumer spending due to job losses could trigger a recession. Additionally, governments may face increased pressure to provide social safety nets for those who have lost their jobs, putting a strain on public finances.

The Path Forward: Ethical AI Development

To mitigate the risks of AI and harness its potential for good, we must prioritize ethical AI development:

- Transparency and Explainability: AI systems should be transparent and explainable, allowing us to understand how they make decisions.

- Robustness and Safety: AI systems should be designed to be robust against adversarial attacks and failures, ensuring their safe operation.

- Fairness and Non-Discrimination: AI algorithms should be audited for biases and regularly updated to prevent discrimination.

- Human Oversight and Control: Humans should retain ultimate control over AI systems, with the ability to intervene when necessary.

- International Collaboration: Governments and organizations must work together to establish global norms and regulations for AI development and deployment.

The future of AI is not predetermined. It is a choice we make as a society. By proactively addressing the risks and embracing ethical principles, we can steer AI towards a future that benefits all of humanity.