Random Forest is an easy-to-use supervised machine learning algorithm for classification and regression problems. It often produces good results even without hyperparameter tuning.

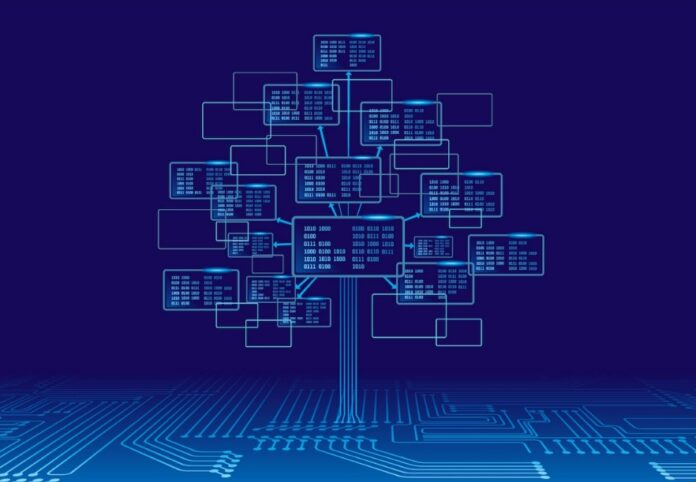

Developed by Leo Breiman, Random Forest builds a “forest” from an ensemble of decision trees. Just as a forest is made up of many trees, a random forest combines many decision trees, and adding more trees generally makes it more robust. The algorithm builds a decision tree on each random sample of the data, obtains a prediction from each tree, and then combines those predictions: by majority vote for classification, or by averaging for regression.
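The combining step can be sketched in a few lines of Python. This is a minimal illustration, assuming each tree's prediction is already available as a plain value: classification uses majority voting, while regression typically averages the trees' numeric outputs. The function names `majority_vote` and `average` are hypothetical, not part of any library.

```python
from collections import Counter
from statistics import mean


def majority_vote(tree_predictions):
    """Classification: return the label predicted by the most trees.

    Counter.most_common orders equal counts by first occurrence,
    so a tie goes to the label that appears first in the list.
    """
    return Counter(tree_predictions).most_common(1)[0][0]


def average(tree_predictions):
    """Regression: return the mean of the trees' numeric predictions."""
    return mean(tree_predictions)


# Hypothetical outputs from five trees for one input.
print(majority_vote(["cat", "dog", "cat", "cat", "dog"]))
print(average([2.0, 3.0, 4.0, 3.0, 3.0]))
```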

Because the ensemble averages the results of many trees, it reduces overfitting and usually performs better than a single decision tree. The Random Forest algorithm works in the following steps:

- Choose random samples, drawn with replacement, from the training dataset.

- Build a decision tree for each sample.

- Obtain a prediction from each decision tree.

- Hold a vote over the predicted outcomes (for regression, average them instead).

- Choose the prediction that received the most votes as the final result.
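The steps above can be sketched from scratch in Python using only the standard library. This is a minimal, hypothetical implementation for illustration, not Breiman's reference code or any library's API; all function and parameter names are our own. Assumptions: trees are kept shallow (`max_depth`), splits use Gini impurity, and each node considers a random subset of `n_features` features, which is the feature randomness a random forest adds on top of plain bootstrap sampling.

```python
import random
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels (0 means the node is pure)."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(X, y, features):
    """Return the (feature, threshold) pair among `features` with the lowest
    weighted Gini impurity, or None if no split improves on the parent node."""
    best, best_score = None, gini(y)
    for f in features:
        # Every distinct value except the largest is a candidate threshold,
        # so both sides of a split are always non-empty.
        for t in sorted(set(row[f] for row in X))[:-1]:
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
            if score < best_score:
                best, best_score = (f, t), score
    return best


def build_tree(X, y, depth, max_depth, n_features, rng):
    """Grow a tree recursively. Internal nodes are (feature, threshold, left,
    right) tuples; leaves are the majority label of the rows they hold."""
    split = None
    if depth < max_depth and len(set(y)) > 1:
        # Feature randomness: each node looks at a random subset of features.
        features = rng.sample(range(len(X[0])), n_features)
        split = best_split(X, y, features)
    if split is None:
        return Counter(y).most_common(1)[0][0]
    f, t = split
    children = []
    for goes_left in (True, False):
        idx = [i for i, row in enumerate(X) if (row[f] <= t) == goes_left]
        children.append(build_tree([X[i] for i in idx], [y[i] for i in idx],
                                   depth + 1, max_depth, n_features, rng))
    return (f, t, children[0], children[1])


def predict_tree(node, row):
    """Follow the splits from the root down to a leaf label."""
    while isinstance(node, tuple):
        f, t, left, right = node
        node = left if row[f] <= t else right
    return node


def fit_forest(X, y, n_trees=25, max_depth=3, n_features=1, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        # Step 1: draw a random sample of rows, with replacement.
        idx = rng.choices(range(len(X)), k=len(X))
        # Step 2: build one decision tree per sample.
        forest.append(build_tree([X[i] for i in idx], [y[i] for i in idx],
                                 0, max_depth, n_features, rng))
    return forest


def predict_forest(forest, row):
    # Step 3: collect one prediction per tree.
    votes = [predict_tree(tree, row) for tree in forest]
    # Steps 4-5: the label with the most votes is the final prediction.
    return Counter(votes).most_common(1)[0][0]


# Hypothetical toy data: two features, both larger for class 1.
X = [[1.0, 1.2], [2.0, 1.9], [3.0, 3.1], [4.0, 4.2],
     [6.0, 5.8], [7.0, 7.1], [8.0, 8.3], [9.0, 8.9]]
y = [0, 0, 0, 0, 1, 1, 1, 1]

forest = fit_forest(X, y)
print(predict_forest(forest, [0.5, 0.5]), predict_forest(forest, [9.5, 9.5]))
```

Production implementations such as scikit-learn's `RandomForestClassifier` grow much deeper trees by default and are heavily optimized; the shallow `max_depth` here only keeps the example short.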

Pros

The Random Forest algorithm has the following benefits:

- By averaging or combining the outputs of many decision trees, random forests reduce overfitting, and they can be used for both classification and regression tasks.

- Random forests typically perform better than a single decision tree on a wide range of datasets. They work with both categorical and numerical data, and in most cases the variables need no scaling or transformation.

- A random forest has lower variance than a single decision tree, because averaging many trees smooths out much of the noise that each individual tree fits.

- Random forests decorrelate their decision trees by considering only a random subset of features at each split, and they report feature-importance scores that can guide feature selection. This makes them a good model for data with many features.

- Random forests are flexible and often highly accurate.

- The random forest algorithm does not require data scaling. Each split compares a single feature against a threshold, so only the ordering of the values matters, and accuracy is essentially unaffected by whether the data is scaled.

- Random forests can maintain good accuracy even when a significant amount of data is missing, although how missing values are handled depends on the implementation.

- Outliers in the input features have little impact on random forests, because splits depend on how values are ordered relative to a threshold, not on their magnitude.

- Random forests handle both linear and non-linear relationships well.
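The scaling and outlier points rest on how a tree splits: each split compares one feature against a threshold, so only the order of the values matters. The sketch below checks this with a hypothetical single-split helper, `best_partition`, using Gini impurity on made-up income and purchase data. It confirms that multiplying the feature by 1000, log-transforming it, or replacing its largest value with an extreme outlier sends exactly the same rows to the left side of the best split.

```python
import math
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_partition(values, labels):
    """Return the set of row indices sent left by the best threshold split
    on a single feature (lowest weighted Gini impurity)."""
    best_rows, best_score = None, float("inf")
    for t in sorted(set(values))[:-1]:
        left = [i for i, v in enumerate(values) if v <= t]
        right = [i for i, v in enumerate(values) if v > t]
        score = (len(left) * gini([labels[i] for i in left])
                 + len(right) * gini([labels[i] for i in right])) / len(values)
        if score < best_score:
            best_rows, best_score = frozenset(left), score
    return best_rows


# Hypothetical data: income (in thousands) and whether a customer bought.
income = [12.0, 18.0, 25.0, 31.0, 47.0, 52.0, 60.0, 75.0]
bought = [0, 0, 1, 0, 1, 1, 1, 1]

original = best_partition(income, bought)
scaled = best_partition([v * 1000 for v in income], bought)
logged = best_partition([math.log(v) for v in income], bought)
outlier = best_partition(income[:-1] + [1e9], bought)  # 75.0 becomes 1e9

print(sorted(original))
print(original == scaled == logged == outlier)
```

Any transformation that preserves the order of the values yields the same candidate partitions in the same order, which is why the chosen split does not move.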

Cons

The following are the Random Forest algorithm’s drawbacks:

- The main drawback of random forests is their complexity.

- Building a random forest takes considerably more time and computation than building a single decision tree.

- The random forest algorithm requires more computational power to train and run.

- With a large number of decision trees, the model is much less intuitive than a single tree, which can be read as a sequence of if/else rules.

- Prediction can be slower than with many other algorithms, because each input must pass through every tree in the forest.

- Random forests are difficult to interpret. They provide feature-importance scores, but unlike linear regression, they have no coefficients that show how each feature affects the prediction.

- For large datasets, random forests can be computationally demanding.

- Because you have little insight into or control over how the model reaches its predictions, a random forest behaves much like a “black box” algorithm.
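Feature importance is one of the few windows into a random forest. One model-agnostic way to compute it, permutation importance, shuffles one feature column at a time and measures how much accuracy drops. The sketch below is a hypothetical standard-library implementation; to stay self-contained it uses a hand-written stand-in function, `model`, instead of a trained forest, so the numbers illustrate the procedure only. Note that the result is a single score per feature, not a coefficient describing how that feature changes the prediction.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean drop in accuracy when each feature column is shuffled on its own."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for f in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[f] for row in X]
            rng.shuffle(column)
            # Copy each row with only feature f replaced by a shuffled value.
            X_perm = [row[:f] + [v] + row[f + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical stand-in for a trained forest: it only looks at feature 0.
def model(row):
    return int(row[0] > 0.5)


data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [int(row[0] > 0.5) for row in X]  # labels depend on feature 0 only

print(permutation_importance(model, X, y))
```

Shuffling feature 0 destroys the signal the model relies on, so its score is large; shuffling feature 1 leaves every prediction unchanged, so its score is zero.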